Player Development: SARAH model updates

Introducing the concept of credible intervals in the SARAH modelling process

A few months ago, alongside Noah Chaikof, Katia Clément-Heydra, Carleen Markey, Adam Pilotte & Mairead Shaw, we published a paper outlining a novel way of quantifying player habits: the SARAH framework.

Essentially, we looked at the various national women's hockey teams and identified the set of "habits" a player regularly executes (e.g., edgework, catching the puck in the hip pocket, pass placement).

Combining the dataset of players' habits with a set of players' microstats (entries via pass/stickhandling, exits via stickhandling/pass, accurate/inaccurate passes, etc.), we developed models to accurately predict if a player possesses a certain habit based on their set of microstats.

Since then, we have made specific improvements to SARAH models 1 and 2, which are outlined in the sections below.

SARAH 1 Updates: Hyper-Parameter Tuning

Threshold Change

As a reminder, the main purpose of SARAH 1 was to help us identify “relevant” habit-event pairs. We achieved this by leveraging the feature selection tool available to us within random forest regressors.

In these models, we had the events as the target variables and all 30 habits as the predictors. To identify the relevant habit-event relationships in the first version of the models, any habit with an importance above 0.0325 was considered to have a strong relationship with a given event.

The first change we made was to slightly increase this threshold to 1/30 (≈0.0333). Intuitively, since there are 30 habits, this threshold makes sense: it represents the average “importance” of a habit with respect to a specific event. By using it, we only select habit-event pairs whose importance is above average.
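To make the selection rule concrete, here is a minimal Python sketch. It assumes the importances are normalized to sum to 1 (as scikit-learn's impurity-based importances are), so that 1/n is their average. The function name and the four-habit toy values are hypothetical and are not taken from our models.

```python
def select_relevant_habits(importances):
    """Keep the habits whose importance is above the average importance.

    `importances` maps habit name -> importance for one event model.
    Assumption: the values sum to 1, so the average is 1 / number of habits.
    """
    threshold = 1.0 / len(importances)
    return sorted(habit for habit, value in importances.items() if value > threshold)


# Hypothetical toy example with 4 habits, so the threshold is 1/4 = 0.25.
# With the 30 habits of SARAH 1, the same rule gives 1/30.
toy_importances = {
    "edgework": 0.40,
    "hip_pocket_catch": 0.30,
    "pass_placement": 0.20,
    "shoulder_check": 0.10,
}
relevant = select_relevant_habits(toy_importances)
```

Tying the threshold to the number of habits means it adjusts automatically if habits are added or removed later.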

Trimming the Trees

Moreover, as their name suggests, random forests are made up of trees (because that’s what we find in a forest, right?). In the first iteration of our models, the trees we had built were fully grown.

In the first iteration, we also selected a threshold that ensured each habit would be meaningfully connected to a minimum of five events. In iteration 2, to make the relationships in our models clearer, we decided to trim the branches by limiting the depth of each tree to 5.

With this constraint and the increased threshold combined, our models performed better under K-fold cross-validation on the additional data available from the latest World Championship. As such, we made the change to max_depth = 5 in our models.
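The comparison between fully grown and trimmed trees can be sketched as follows with scikit-learn. This is an illustration only: the data is synthetic, and the number of trees, the number of folds and the R² scoring are assumptions rather than the settings we used.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import KFold, cross_val_score

# Synthetic stand-in data: 120 player observations, 30 habit scores.
rng = np.random.default_rng(0)
X = rng.random((120, 30))
# Hypothetical event driven mostly by the first two habits, plus noise.
y = 2.0 * X[:, 0] + X[:, 1] + rng.normal(0.0, 0.1, size=120)


def make_forest(max_depth):
    # max_depth=None grows each tree fully; max_depth=5 trims it.
    return RandomForestRegressor(n_estimators=50, max_depth=max_depth, random_state=0)


def mean_cv_r2(max_depth, n_splits=5):
    """Average R^2 across the K folds for a forest with the given depth limit."""
    folds = KFold(n_splits=n_splits, shuffle=True, random_state=0)
    scores = cross_val_score(make_forest(max_depth), X, y, cv=folds, scoring="r2")
    return scores.mean()


full_depth_r2 = mean_cv_r2(None)
trimmed_r2 = mean_cv_r2(5)
```

Which setting scores better depends on the data, so the two numbers should be compared on the real dataset rather than read off this toy example.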

SARAH 2 Updates: Methodology Changes

Classifying the Habits

In SARAH 2, our main objective was to quantify the micro-habits of players by leveraging the meaningful relationships identified as part of SARAH 1. In the first iteration of our models, SARAH 2 consisted of a set of random forest classifiers.

For iteration 2, the first major change concerns the type of model we use to quantify habits. Instead of using random forest classifiers, we decided to leverage logistic regression models, which are computationally simpler and more interpretable than the “black boxes” that are random forests.

To successfully implement a logistic regression-based modelling framework for SARAH 2, we had to tweak the modelling thresholds to keep the approach feasible.

Going back to the basics, logistic regression is a classification model that assigns the value of either 0 (“habit is not performed successfully”) or 1 (“habit is performed successfully”) to our target habit based on a variety of predictors (events).

Logistic regression models assign this binary value to the target habit by calculating the probability that said habit is successfully performed. If the habit’s success probability is above 0.5, logistic regression models assign the value of 1. Otherwise, the value assigned is equal to 0.

But the 30 habits in the SARAH models vary in difficulty. As a result, a habit's average success probability strongly affects whether players can be expected to perform it successfully “more often than not”.

For instance, the average success probability of slip passes (a habit that is very difficult to perform successfully) is around 0.25. By contrast, the use of linear crossovers to build speed has a success probability of 0.48, which is indicative of a habit that is much easier to perform.

Given the variability in the difficulty level of habits, we adjust for this difference by tailoring our threshold to each individual habit. As such, instead of using the traditional 0.5 threshold, the average success probability from our tracking is now our benchmark to differentiate habits that are performed “successfully” from those that are not.
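A minimal sketch of the difference between the two rules, using the two averages quoted above as thresholds. The function names and the predicted probability of 0.30 are hypothetical and for illustration only.

```python
# Average success probabilities quoted above, used as habit-specific thresholds.
HABIT_THRESHOLDS = {"slip_pass": 0.25, "linear_crossovers": 0.48}


def classify_default(predicted_probability):
    """Traditional logistic regression rule: 1 if the probability is above 0.5."""
    return int(predicted_probability > 0.5)


def classify_habit(habit, predicted_probability, thresholds=HABIT_THRESHOLDS):
    """Habit-specific rule: 1 if the probability is above that habit's average."""
    return int(predicted_probability > thresholds[habit])


# Hypothetical predicted probability for one player in one period.
p = 0.30
default_label = classify_default(p)                        # same for every habit
slip_pass_label = classify_habit("slip_pass", p)           # compared with 0.25
crossover_label = classify_habit("linear_crossovers", p)   # compared with 0.48
```

Under the 0.5 rule, a difficult habit such as the slip pass would almost never be labelled as successful, which is why the benchmark is moved to each habit's own average.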

Bayesian Conjugacy

Once our habits are classified per period for each player, we can transform this Frequentist model into a Bayesian one with the help of probability theory.

Bayesian modelling will allow us to incorporate the concept of uncertainty in our results. In other words, instead of saying that the success probability of a habit is equal to 0.59, we’ll be able to provide a reasonable range (credible interval) in which that probability plausibly lies (e.g., between 0.51 and 0.64).

First, we need to talk about binomial distributions. Binomial probability theory helps us deal with problems where each observation is discrete and takes one of two values (0 or 1). Counting the successes (1) and failures (0) gives us a binomial distribution characterizing the probability of successful performance of a habit.

What’s very interesting about the binomial distribution is that we can use it as our likelihood in a conjugacy example with beta distributions (a family of continuous distributions over values between 0 and 1). We introduced Bayesian conjugacy earlier this summer in the context of DEVe models.

Given the following equations, Bayesian conjugacy allows us to significantly simplify the math and obtain a beta posterior distribution with a beta prior and a binomial likelihood:
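In generic notation, the standard beta-binomial result reads as follows. The symbols are assumed here for illustration: p is a habit's success probability, α and β are the prior parameters, n is the number of classified observations and k is the number of successes among them.

```latex
\begin{aligned}
\text{Prior:} \quad & p \sim \mathrm{Beta}(\alpha, \beta) \\
\text{Likelihood:} \quad & k \mid p \sim \mathrm{Binomial}(n, p) \\
\text{Posterior:} \quad & p \mid k \sim \mathrm{Beta}(\alpha + k,\ \beta + n - k)
\end{aligned}
```

The posterior mean is then (α + k) / (α + β + n), so the prior acts like α prior successes and β prior failures added to the observed counts.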

In short, our binomial likelihood, for a given player, stems from the discrete data produced by our Frequentist classification model described above. Our beta prior stems from the distribution fitted to the habits' success probabilities in the dataset from iteration 1 of our model (separated by Group A vs B).

The resulting beta posterior distribution will be tailored to each player’s ability to perform specific habits and will yield a continuous credible interval allowing us to quantify player habits.
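As a rough sketch of this update, the snippet below combines a beta prior with a player's success and failure counts and estimates a credible interval by sampling from the posterior with Python's standard library. The prior parameters, the counts and the 90% level are hypothetical values chosen for illustration, not figures from our dataset.

```python
import random


def beta_posterior(alpha_prior, beta_prior, successes, failures):
    """Conjugate update: add the observed counts to the prior parameters."""
    return alpha_prior + successes, beta_prior + failures


def credible_interval(alpha, beta, level=0.90, draws=20000, seed=0):
    """Equal-tailed credible interval estimated from posterior samples."""
    rng = random.Random(seed)
    samples = sorted(rng.betavariate(alpha, beta) for _ in range(draws))
    tail = (1.0 - level) / 2.0
    low_index = int(tail * draws)
    high_index = int((1.0 - tail) * draws) - 1
    return samples[low_index], samples[high_index]


# Hypothetical prior (mean 0.56) and hypothetical counts for one player and habit:
# 14 periods classified as successful, 7 as unsuccessful.
alpha_post, beta_post = beta_posterior(5.6, 4.4, successes=14, failures=7)
posterior_mean = alpha_post / (alpha_post + beta_post)
low, high = credible_interval(alpha_post, beta_post)
```

Sampling keeps the example free of dependencies. With SciPy available, the same bounds could be read directly from the beta quantile function instead.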

Case Study: Taylor Heise

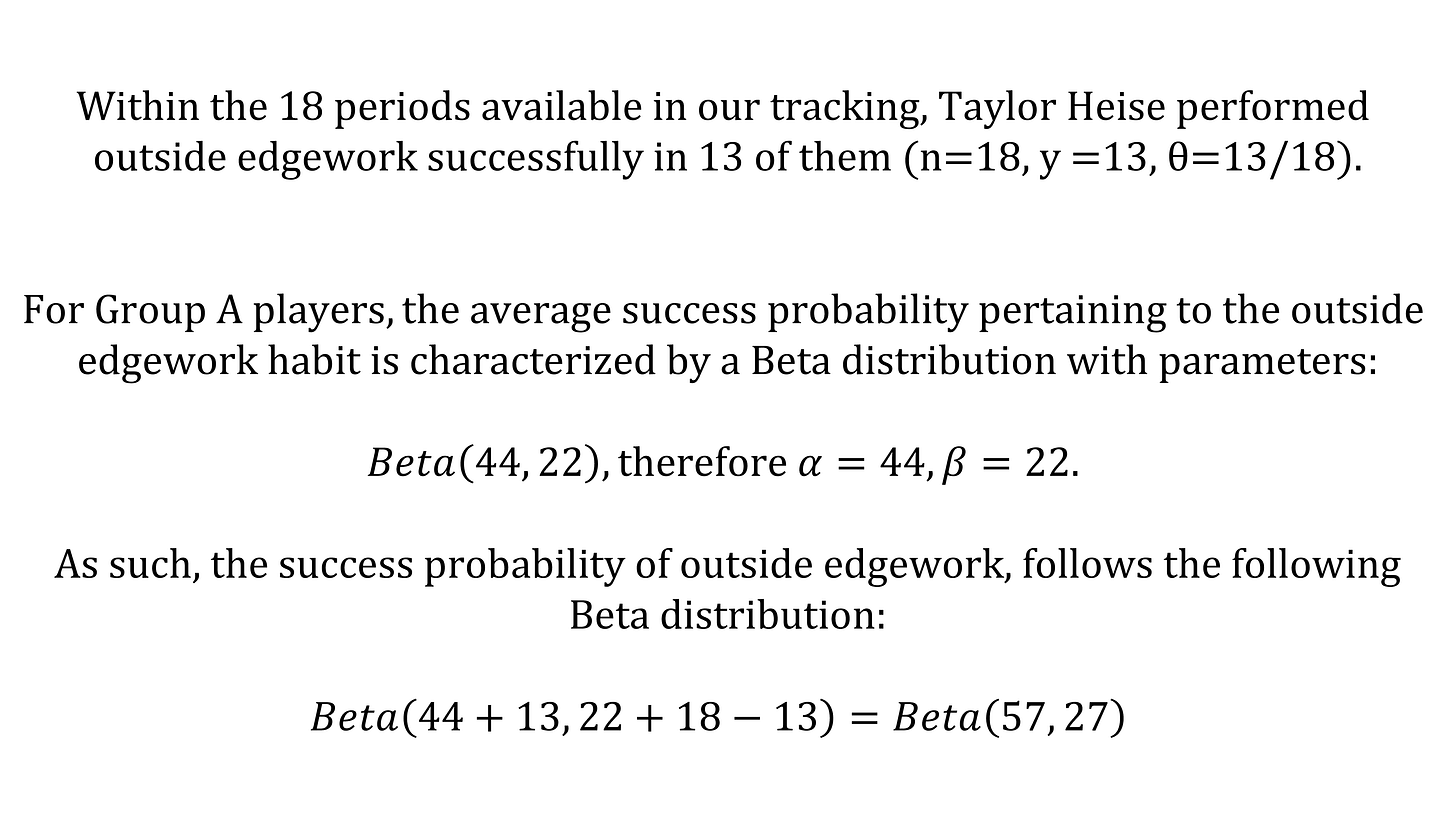

To illustrate our Bayesian model, we can take the following example with Taylor Heise’s outside edgework habit:

We repeat this process for all 30 habits. That way, we can obtain the success probability distribution for all 30 of Taylor Heise’s habits. Below, we have the results of her habits grouped by skill set.

For each skill set, the blue-green part of the graph represents the portion of Heise’s skill set that is above the global average. All skill sets, each made up of different habits as outlined in the first iteration of our model, stabilize around 0.56 on average (i.e., the global average).

One minor change was made to the skill sets with the re-classification of shoulder checks to the puck reception skill set, given our optimization of skill blending patterns.

In short, Heise’s results (in the blue-green portion of the graph) show that she is already a force to be reckoned with at the international level. Given her elite underlying habits, it comes as no surprise that she led her first tournament in scoring and was named MVP of the World Championship.

If you are a coach who wants to learn more about how to leverage hockey analytics efficiently at any level, Jack Han & I recently released a course that may interest you.

In this 2-hour course, split into 9 different chapters, Jack & I share our experiences working with different organizations worldwide and discuss best practices when starting to use analytics in coaching.